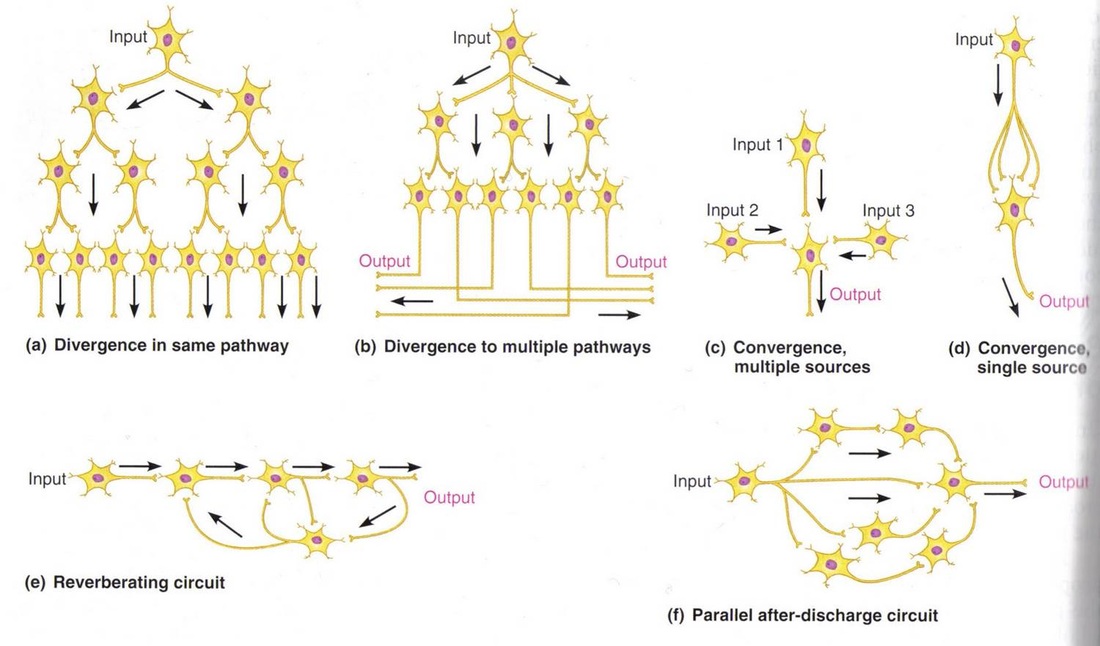

I've just been looking through the lecture slides that I made last year to teach the difference between the neuronal way of looking at the brain and the symbolic way of looking at the mind. To highlight how much harder it is for us to work out what a neuronal system is doing, I use this picture (which I found here) of small neuronal networks.

The challenge is to look at each network and say its function.

These are small networks, so their functions should not be particularly difficult to determine. Still, the answer in each case is not immediately evident. This is because our minds are set up to think of functions, objects, etc. as holistic and meaningful units, not as maximally minimalistic, homogeneous units like those above.

In fact, the above diagrams are insufficient to fully describe the functions of the networks. We also need to consider whether particular synapses/neurons are excitatory or inhibitory. This is evident if you look at (e), the Reverberating circuit.

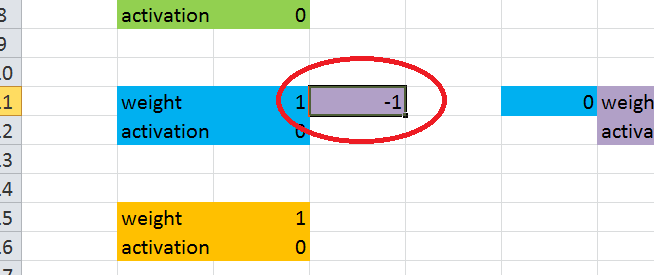

Try to imagine what this network is doing.

Let's suppose that all of the synapses are excitatory. A message at the input will pass along the chain of neurons, left to right. At the last neuron, the message will be output (perhaps having been either amplified or attenuated). However, the output neuron also feeds the same output message back to another neuron, which then loops the information back to the penultimate neuron in the chain. An interesting thing here is that the output at a given time is fed back to the network so that it will affect the next output. In other words, this network may be able to display behaviour that changes over time.

There are a few questions we can ask about this:

- If no input is applied, what will be the resulting output over a few timesteps?

- If a constant input is applied, what will be the resulting output?

- If a constant input is applied for a few timesteps, then removed, what will be the resulting output?

- If a transient input (just one timestep) is applied, then removed, what will be the resulting output?

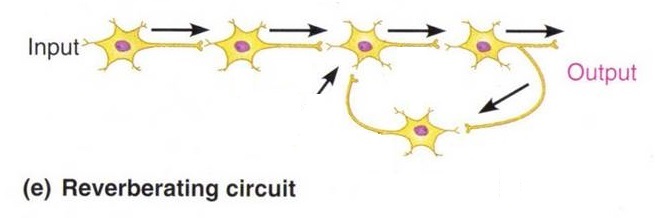

Here is the Excel file for this network.

Open it and answer the questions above by trying each one out.

Important! Because this network is recurrent (its output feeds back into itself), the Excel file has circular references in it. Excel doesn't like these by default, so you have to enable iterative calculation before using the file. Like this:

File --> Options --> Formulas --> Enable Iterative Calculation --> Maximum Iterations = 1

This is given in more detail on this page.

To reset the network, simply change the input to something which isn't a number for one timestep, then switch it back to a number.

With a transient input, the network goes into oscillation. I used an input of 1 for just one timestep, then set it back to 0 and refreshed the network (F9) a few times to observe the 1,0,1,0,1... behaviour. Oscillatory behaviour is a useful feature for a network - think of breathing, heart rate and walking as three things we do which are built upon oscillation.

However, there is something very undesirable in this network. When you applied a constant input (I used 1), you should have found that the network's output kept growing indefinitely. In a biological network, this would lead to your head exploding, or at least an epileptic fit. It works like the positive feedback that you hear if you put a guitar close to an amp. One solution is negative feedback, where the signal that is fed back suppresses the network instead of exciting it.
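For readers without Excel, the two behaviours described so far can be approximated in a few lines of Python. This is only a sketch under assumptions that are not taken from the spreadsheet: the whole loop is collapsed into a single recurrence, the fed-back signal arrives two timesteps late (chosen so that a transient input gives the 1,0,1,0 pattern), every synapse has a strength of 1, and activations cannot go below zero. The names `simulate`, `feedback_weight` and `loop_delay` are made up for this illustration.

```python
def simulate(inputs, feedback_weight=1.0, loop_delay=2):
    """Return the output at each timestep for a single feedback loop.

    Assumed model (not read from the spreadsheet):
        out[t] = max(0, inputs[t] + feedback_weight * out[t - loop_delay])
    """
    outputs = []
    for t, x in enumerate(inputs):
        # Before the loop has had time to fill, nothing is fed back.
        fed_back = outputs[t - loop_delay] if t >= loop_delay else 0.0
        outputs.append(max(0.0, x + feedback_weight * fed_back))
    return outputs


# Transient input: 1 for one timestep, then 0.
transient = simulate([1, 0, 0, 0, 0, 0, 0, 0])
# Constant input of 1 at every timestep.
constant = simulate([1] * 8)

print(transient)  # [1.0, 0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0]
print(constant)   # [1.0, 1.0, 2.0, 2.0, 3.0, 3.0, 4.0, 4.0]
```

Under these assumptions the transient input keeps echoing around the loop, while the constant input adds to what is already circulating, so the output climbs without limit. The exact numbers in the spreadsheet may differ, depending on its weights and on how Excel orders its calculations.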

Try the same set of experiments again.

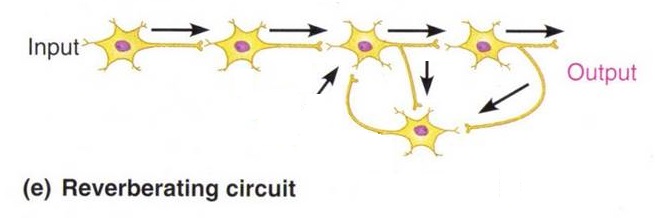

The unconstrained increase in activation is eliminated, so negative feedback has benefitted our network. However, another problem has popped up. Did you notice what happened when the network was presented with a transient input? Unfortunately, when the input is removed, the network stops oscillating. This may be desirable in some situations, like walking, but not in others, like the heartbeat. Let's remove this problem too.
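As a rough check on the negative-feedback behaviour just described, here is a small Python sketch. It rests on assumptions that are not taken from the spreadsheet: the loop is collapsed into one recurrence with a two-timestep delay, the feedback synapse has a strength of -1, and activations are clipped at zero (a neuron cannot fire a negative amount). The names `simulate`, `feedback_weight` and `loop_delay` are hypothetical.

```python
def simulate(inputs, feedback_weight=-1.0, loop_delay=2):
    """Return the output at each timestep for a loop with inhibitory feedback.

    Assumed model (not read from the spreadsheet):
        out[t] = max(0, inputs[t] + feedback_weight * out[t - loop_delay])
    """
    outputs = []
    for t, x in enumerate(inputs):
        # Before the loop has had time to fill, nothing is fed back.
        fed_back = outputs[t - loop_delay] if t >= loop_delay else 0.0
        # Clipping at zero stands in for "no negative firing".
        outputs.append(max(0.0, x + feedback_weight * fed_back))
    return outputs


# Constant input of 1: the output switches on and off but stays bounded.
constant = simulate([1] * 8)
# Transient input: once the input is gone, the inhibition silences the loop.
transient = simulate([1, 0, 0, 0, 0, 0, 0, 0])

print(constant)
print(transient)
```

In this sketch the constant input gives a bounded on/off pattern, and the transient input dies out after the first timestep, which is the pair of behaviours discussed above. The precise pattern you see in Excel may differ.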

The network below adds a single extra synapse from one of the neurons to the feedback neuron.

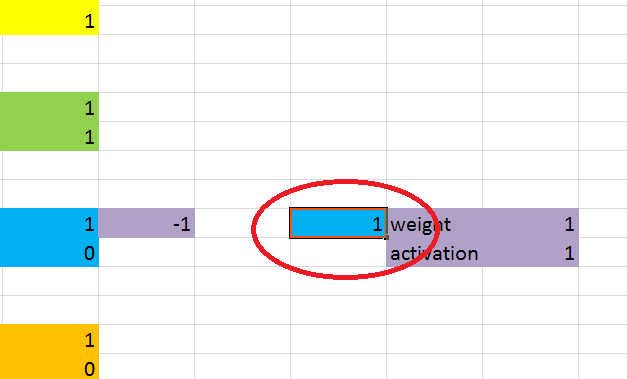

The implementation is simple. I've already set up the synapse, but left it with a strength of zero. Just change that strength to something non-zero, as shown on the right. Do you think a negative or positive value will work better? On the right, I've used a positive value, but try it with both (don't forget to reset the network between trials - this network has a memory, so you have to reset it to clear its memory).

It's worth noting that the blue neuron is an excitatory neuron, so it is more biologically plausible to use a positive value. However, it isn't impossible that different synapses of the same neuron have different behaviours.